Using IRQbalance to Improve Network Throughput in XenServer

If you are running XenServer 5.6 FP1 or later, there is a little trick you can use to improve network throughput on the host.

By default, XenServer handles guest network traffic with the netback process, and each host is limited to four instances of netback, with one instance running on each of dom0’s vCPUs. When a VM starts, each of its VIFs (virtual interfaces) is assigned to a netback instance in round-robin fashion. While this distributes VIFs across netback processes fairly evenly, it is inefficient under high network load: a busy VIF can only use the one vCPU its netback instance is tied to, so dom0’s CPU capacity as a whole is not fully utilized.

For example, suppose you have four VMs on a host, each with one VIF. VM1 is assigned to netback instance 0, which is tied to vCPU0; VM2 is assigned to netback instance 1, which is tied to vCPU1; and so on. Now suppose VM1 experiences a very high network load. Netback instance 0 is tasked with handling all of VM1’s traffic, and vCPU0 is the only vCPU doing work for netback instance 0. That means the other three vCPUs sit idle while vCPU0 does all the work.
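If it helps to see the round-robin placement spelled out, here is a tiny sketch. It only models the assignment described above and is not XenServer's actual code; the function name `netback_for_vif` and the variable `NETBACK_COUNT` are made up for illustration.

```shell
#!/bin/sh
# Illustrative model of round-robin VIF placement (not XenServer code).
NETBACK_COUNT=4   # the four-instance limit described above

# netback_for_vif N: print the netback instance for the Nth VIF (0-based).
netback_for_vif() {
    echo $(( $1 % NETBACK_COUNT ))
}

# Four VMs with one VIF each, as in the example.
for vif in 0 1 2 3; do
    nb=$(netback_for_vif "$vif")
    echo "VM$((vif + 1)) VIF -> netback $nb -> vCPU$nb"
done
```

A fifth VIF would wrap around to netback instance 0 again. Placement depends only on the order in which VIFs appear, not on how busy each one is.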

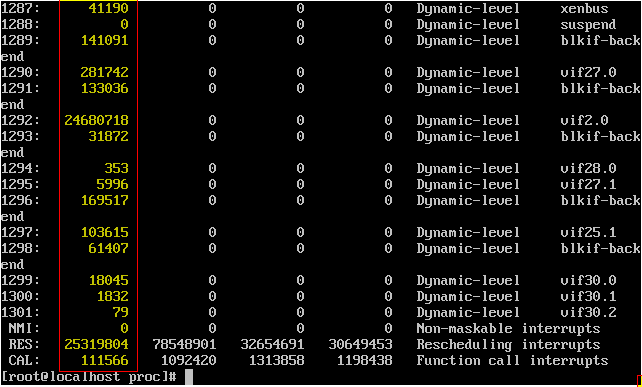

You can see this phenomenon for yourself by doing a cat /proc/interrupts from dom0’s console. You’ll see something similar to this:

(The screenshot doesn’t show it, but the first column of highlighted numbers is CPU0, the second is CPU1, and so on. The numbers represent the quantity of interrupt requests.)
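If the raw table is hard to read, a short awk wrapper can total the interrupt counts per CPU. This is a minimal sketch that assumes the usual /proc/interrupts layout (a header row of CPU names, then one row per interrupt source); `per_cpu_totals` is a name I made up.

```shell
#!/bin/sh
# per_cpu_totals: read /proc/interrupts-style text on stdin and
# print one "CPUn total" line per CPU column.
per_cpu_totals() {
    awk '
        NR == 1 { ncpu = NF; next }      # header row: CPU0 CPU1 ...
        {
            # Fields 2..ncpu+1 hold the per-CPU counts; skip anything non-numeric.
            for (i = 2; i <= ncpu + 1 && i <= NF; i++)
                if ($i ~ /^[0-9]+$/) total[i - 2] += $i
        }
        END { for (c = 0; c < ncpu; c++) printf "CPU%d %.0f\n", c, total[c] }
    '
}

# On dom0, feed it the live table.
if [ -r /proc/interrupts ]; then
    per_cpu_totals < /proc/interrupts
fi
```

One CPU with a far larger total than the others is the imbalance described above.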

If you’ve ever troubleshot obscure networking configurations in the physical world, you’ve probably run into a router or firewall whose CPU was being asked to do so much that it was causing a network slowdown. Fortunately in this case, we don’t have to make any major configuration changes or buy new hardware to fix the problem.

All we need to do to increase efficiency in this scenario is to evenly distribute the VIFs’ workloads across all available CPUs. We could manually do this at the bash prompt, or we could just download and install irqbalance.
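For the curious, the manual route means writing a CPU bitmask to /proc/irq/<IRQ>/smp_affinity for each interrupt. The sketch below only prints the commands it would run, so nothing changes on the host. The IRQ numbers are invented for illustration, and `cpu_mask` and `pin_irq` are hypothetical helper names; read the real IRQ numbers from /proc/interrupts.

```shell
#!/bin/sh
# cpu_mask N: print the hex smp_affinity bitmask that selects only CPU N.
cpu_mask() {
    printf '%x\n' $(( 1 << $1 ))
}

# pin_irq IRQ CPU: print (do not run) the command that would pin IRQ to CPU.
pin_irq() {
    echo "echo $(cpu_mask "$2") > /proc/irq/$1/smp_affinity"
}

# IRQ numbers 1272-1275 are made up; spread them over four CPUs in turn.
cpu=0
for irq in 1272 1273 1274 1275; do
    pin_irq "$irq" "$cpu"
    cpu=$(( (cpu + 1) % 4 ))
done
```

Doing this by hand gets tedious, because the interrupts change as VMs start and stop. That is the argument for letting a daemon handle it.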

irqbalance is a Linux daemon that automatically distributes interrupts across all available CPUs and cores. To install it, issue the following command at the dom0 bash prompt:

You can either restart the host or manually start the service/daemon by issuing:
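Here is a rough sketch of what the install and start steps can look like on a CentOS-based dom0. Treat the details as assumptions to check on your own host: I'm assuming the package comes from the CentOS `base` repository, enabled for this one command, and the `run` wrapper with its `DRY_RUN` switch is a made-up helper so that nothing executes until you ask it to.

```shell
#!/bin/sh
# Dry-run by default: set DRY_RUN=0 to actually execute (as root on dom0).
DRY_RUN=${DRY_RUN:-1}

# run CMD...: print the command in dry-run mode, otherwise execute it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "would run: $*"
    else
        "$@"
    fi
}

# Assumption: irqbalance is available from the "base" repo.
run yum --enablerepo=base install -y irqbalance

# Start the daemon now, without restarting the host.
run service irqbalance start
```

Run it once as-is to review the commands, then again with DRY_RUN=0 on a test host when you are happy with them.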

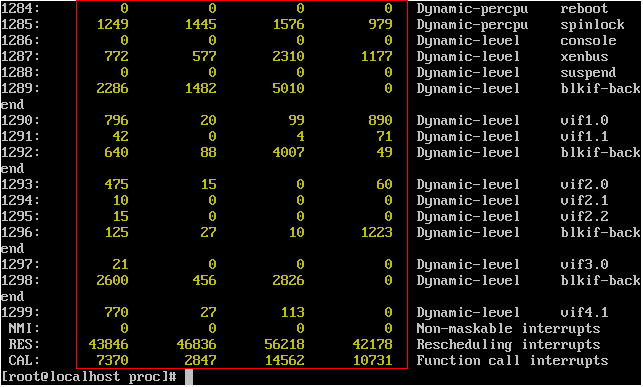

Now restart your VMs and do another cat /proc/interrupts. This time you should see something like this:

That’s much better! Try this out on your test XenServer host(s) first and see if you can tell a difference. Citrix has a whitepaper titled “Achieving a fair distribution of the processing of guest network traffic over available physical CPUs” (that’s a mouthful) that goes into more technical detail about netback and irqbalance.